Open-source LLMs Now Match the Quality of GPT-4

People have been hacking together workflows for programming alongside LLMs, and the trend seems to be continuing as more models are released.

The biggest alpha in coding with AI right now is:

Debug with GPT-4, Code with Claude 3.

GPT-4 is still king when it comes to logic, but it's extremely lazy.

Meanwhile, Claude would do anything you ask.

The duo together is unbeatable.

— Pietro Schirano (@skirano) April 1, 2024

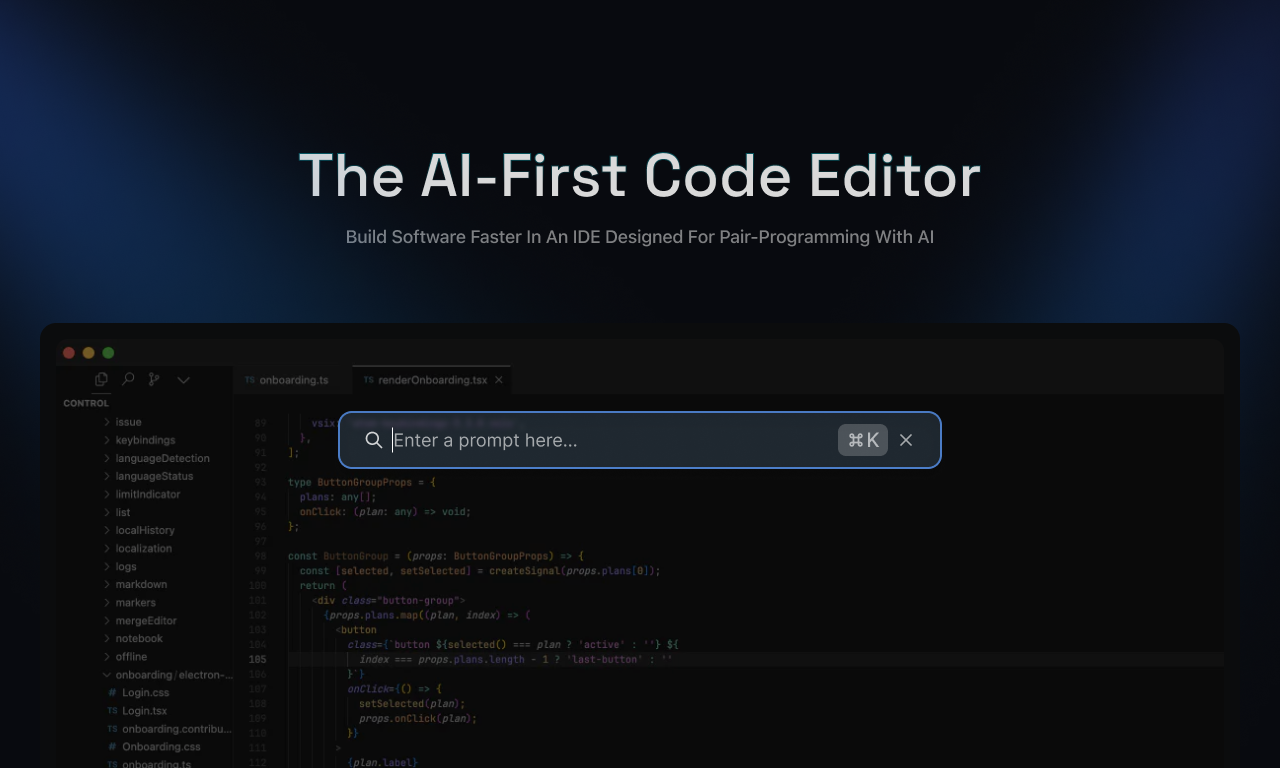

I just setup @ollama with the Cody extension on VS code and it is awesome.

Here is how to do it.

✅Install Ollama and download the models you want to use.

✅Install Cody in your VS code

✅ Go to setting of the extension and enable "Cody experimental: Ollama chat

✅When starting… pic.twitter.com/69tsiB2vFp

— Vlad (@deifosv) April 3, 2024
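Once Ollama is installed and a model is downloaded, you can also talk to it directly from a script. The sketch below is a minimal example, not what the Cody extension does internally: it assumes Ollama is serving on its default local port (11434) and that a model tagged `llama3` has been pulled. The helper names `build_request` and `ask` are made up for illustration.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server.

    The model tag "llama3" is an assumption; use whichever model you pulled.
    """
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, model: str = "llama3") -> str:
    """Send the prompt and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]


# Example (needs a running Ollama server with the model available):
# print(ask("Write a Python function that reverses a string."))
```

Setting `"stream": False` asks for the whole answer in a single JSON object, which keeps the example short. An editor integration would more likely stream tokens as they arrive.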

Mark Zuckerberg keeps confidently announcing new Llama models.

Me: Bearded Zuck you have to stop. Your smoked meat's too tough. Your swag too different. Your open source LLM is too bad. they'll kill you

Bearded Zuck: pic.twitter.com/pdKwMUmpKS

— Mike Rundle (@flyosity) April 18, 2024

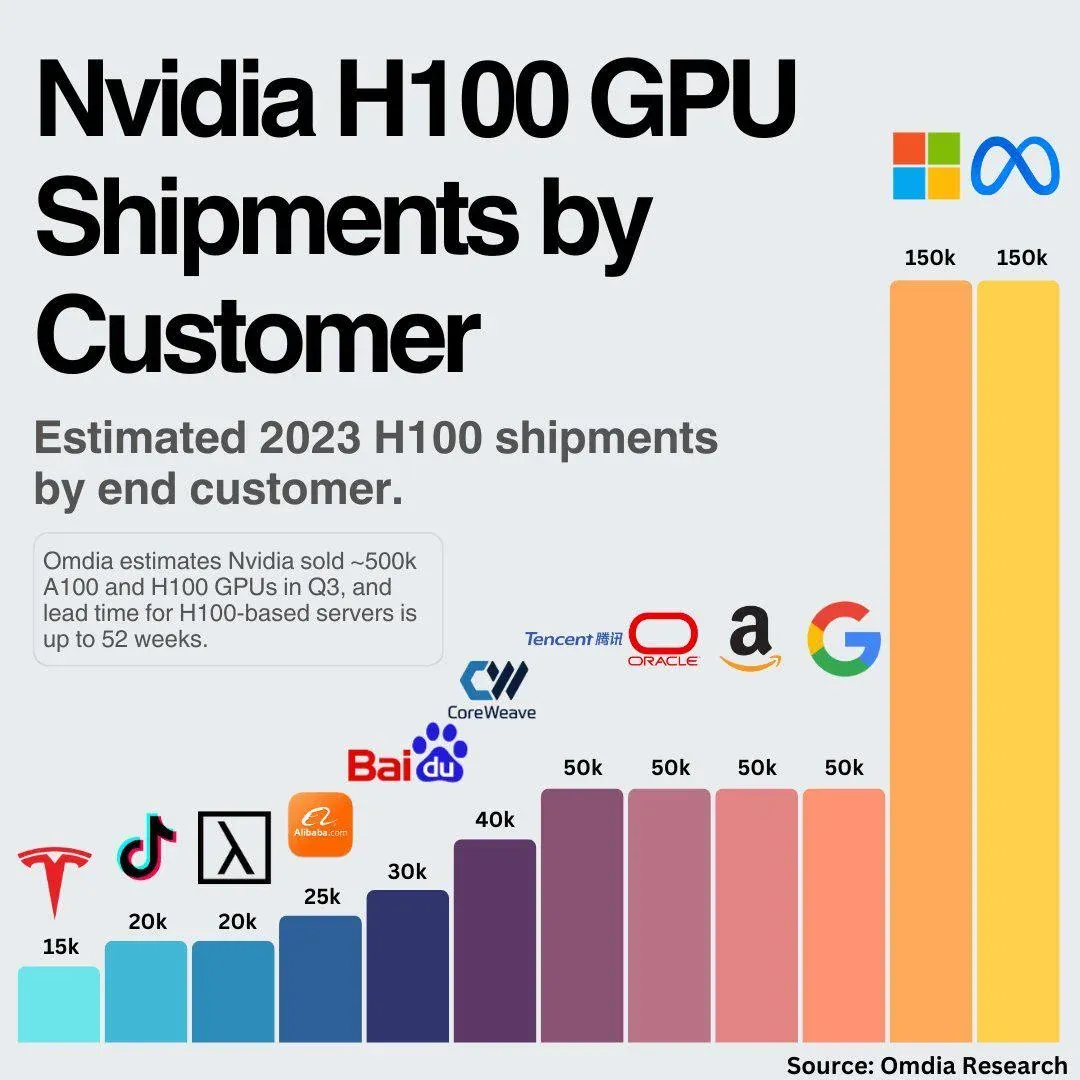

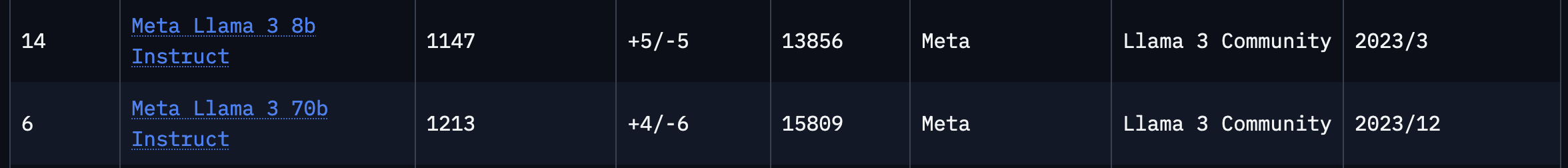

Mark Zuckerberg announced Llama 3, which Meta has now released.

People are already trying it out in different ways, but it is still early: the model came out only a week ago.

Llama 3 8B running 1.89 tokens/s on a Raspberry Pi 5 is pretty CRAZY pic.twitter.com/kK6bHfYu1p

— Adam C.H. (@adamcohenhillel) April 20, 2024
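To put that number in perspective, here is a quick back-of-the-envelope calculation. The 1.89 tokens/s rate comes from the tweet. The response lengths are arbitrary examples, and the helper name `seconds_to_generate` is made up for illustration.

```python
def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time needed to emit num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


PI_RATE = 1.89  # tokens/s reported for Llama 3 8B on a Raspberry Pi 5

# Hypothetical response lengths, chosen only for illustration.
for n in (50, 200, 1000):
    print(f"{n} tokens: about {seconds_to_generate(n, PI_RATE):.0f} s")
```

At this rate a 200-token answer takes a little under two minutes. That is slow for interactive use, but notable for a model of this size on hardware this small.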

Boom!

Open source LLaMA 3 (on Groq) vs. closed source GPT-4.

Prompt: code a snake game in Python.

There is no comparison.1

pic.twitter.com/uBwH6XqiWS

— Brian Roemmele (@BrianRoemmele) April 22, 2024